Note: To be clear, I am not accusing Airbnb or Facebook – or anyone else – of allowing this. According to my understanding of their Acceptable Use Policies and the law, this is way out of bounds and likely to result in trouble for all involved. Do not do it.

Updated March 2018: I have confirmed that Airbnb is doing this.

A few years ago, I wrote a series called “Social Media for Social Evil,” a follow-up to my “Open Source Intelligence” series, where I talked about how social media could be misused for everything from online impersonation to cracking someone’s banking questions. Those posts became a conference presentation where I would “live social engineer” an audience volunteer.

I wrote about these issues because a number of groups had been actively using these attacks for years. I detected and observed a number of them. My goal was to get the word out to warn and equip people.

A New Angle of Attack

In this case, I want to talk about how someone can use similar tactics to actively cause damage in potentially undetectable ways. Last week, someone released a proof of concept using the API for 23andMe’s DNA database [archived] to grant or block access based on race, gender, or other traits. While there are legitimate use cases – like a forum for battered women that filters out men – the more questionable use cases are easier to imagine.

There’s a problem with this approach though. According to their site, 23andMe has just over a million members, and with the cost of the testing kit ($99 each), their growth will be slow. More importantly, at that price and based on their marketing, SEO, etc., their demographic is likely upper middle class to affluent white men in the US. In short, they’re uninteresting. Even worse, how many of them would authorize a site to use their 23andMe data?

We need a site with more users and a more ubiquitous presence on the web, but with similar demographic information.

Facebook to the Rescue!

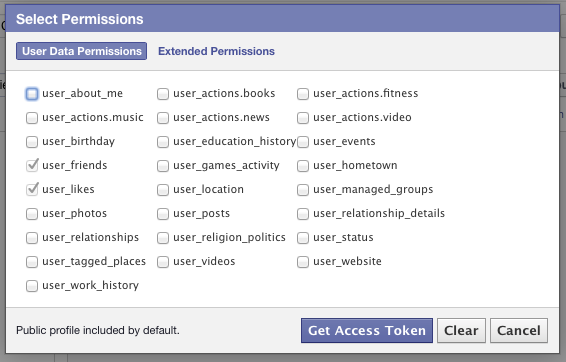

Let’s consider a common scenario: you want to sign up for a site and, instead of requiring a new username and password, it allows you to sign in with Facebook. Or maybe it allows you to “share with friends” or “see your friends’ recommendations” like Airbnb, Hulu, and thousands of other sites do. Of course, the site needs access to your friends list, but it also requests access to your “Likes.” It’s nothing significant, so you agree.

Behind the scenes, these are the only permissions the developer needs to request:

Strictly speaking, ‘user_friends’ isn’t necessary for the attack I’m about to lay out but it could be useful later. But notice that I don’t need your ‘user_status’ or ‘user_religion_politics’ or anything else that is obviously sensitive. I just need your Likes so I can make good recommendations. Yes, that’s totally the reason.

As early as 2009, MIT demonstrated that it was possible to build a “gaydar” (or gay radar) based on your connections and who is connected to whom. More recently (2013), a group at the University of Cambridge showed that it could determine political affiliation, drug usage, and even race or ethnicity:

“Computer software inferred with 88% accuracy whether a male Facebook user was homosexual or heterosexual – even if that person chose not to explicitly reveal that information. It had a 75% accuracy rate for predicting drug use among Facebook users, analysing only public “like” updates.”

On the positive side, since the site knows your political leanings and ethnicity, maybe it can make television or book recommendations for you. Odds are those recommendations will be somewhere between “okay” and “amazing.” Or, as in the original example of a battered women’s forum, the site may be able to validate that you have the likes of an 18-35 year old woman, you recently changed your relationship status, and you also have the likes of someone with a small child. This is a powerful and potentially legitimate use for this sort of data.

On the negative side, imagine a site with forums dedicated to insulting certain ethnicities or political leanings. By simply filtering the forum list and blocking access to those discussion threads, the site can ensure that, for all intents and purposes, those threads don’t exist for the people being targeted. Or alternatively, imagine an unscrupulous host on Airbnb who wanted to exclude [choose: white, black, Hispanic, gay, Christian] people from renting. It would be trivial to always mark the requested dates as “taken” and never let that person book a stay. Proving wrongdoing would be somewhere between challenging and impossible.

Now let’s sketch up some code to get rolling. In this case, I’ll use the PHP library but you can use any. Ignoring my excessive commenting, it’s under 30 lines of code:

This isn’t a complete solution: even with the data in hand, two more steps remain. I still need to rate/categorize the data to identify which patterns are acceptable vs unacceptable, and then I need to wire that into my business logic.
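To make “the data” concrete, here is a minimal sketch in Python (not the PHP listing mentioned above) of what a site holds once a user has granted access to their Likes. It assumes the responses have already been fetched and decoded, and that each one has the general shape of a Graph API list response: a `data` array of objects with `id` and `name`, plus an optional `paging` object. The function name `iter_like_names` and the sample data are hypothetical and exist only for illustration.

```python
from typing import Iterable, Iterator


def iter_like_names(pages: Iterable[dict]) -> Iterator[str]:
    """Yield the page name of every Like across already-fetched response pages.

    Assumption: each element of `pages` is one decoded JSON response shaped
    like {"data": [{"id": "...", "name": "..."}, ...], "paging": {...}}.
    """
    for page in pages:
        for entry in page.get("data", []):
            name = entry.get("name")
            if name:  # skip entries that carry no usable name
                yield name


# Hypothetical sample data standing in for two pages of results.
sample_pages = [
    {
        "data": [
            {"id": "1", "name": "Green Bay Packers"},
            {"id": "2", "name": "Cheese"},
        ],
        "paging": {"next": "..."},
    },
    {"data": [{"id": "3", "name": "Brett Favre"}, {"id": "4"}]},
]

like_names = list(iter_like_names(sample_pages))
print(like_names)
```

The point is how little there is to it: a flat list of page names is all the later steps need, and the user only ever saw a request for their “Likes.”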

For the rating step, I considered using the Alchemy API or similar, but I realized it’s easier than that. For example, if someone’s “Likes” consist of:

- Barack Obama, Hillary Clinton, and Planned Parenthood; or

- George Bush, Rush Limbaugh, and Heritage Foundation; or

- the Packers, Cheese, and Brett Favre;

I can probably draw safe conclusions about political, religious, or other leanings. From there, it’s just adjusting my site to behave in ways to take advantage of these insights.
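As a rough illustration of how little the rating step requires, the sketch below tags a list of Like names with a plain lookup table and reports the most common tag. It is written in Python rather than PHP, and everything in it is an assumption made for the example: the table `TAGS_BY_PAGE`, the tag labels, the `min_hits` threshold, and the function names. A real profile would be far noisier, which is exactly why it pays to know what your own Likes reveal.

```python
from collections import Counter

# Hypothetical lookup table: lowercased page name -> coarse tag.
# The labels are illustrative guesses, not a validated model.
TAGS_BY_PAGE = {
    "barack obama": "politics:left",
    "hillary clinton": "politics:left",
    "planned parenthood": "politics:left",
    "george bush": "politics:right",
    "rush limbaugh": "politics:right",
    "heritage foundation": "politics:right",
    "the packers": "sports:packers",
    "brett favre": "sports:packers",
    "cheese": "sports:packers",
}


def tally_tags(like_names):
    """Count how many of the given Likes fall under each known tag."""
    counts = Counter()
    for name in like_names:
        tag = TAGS_BY_PAGE.get(name.strip().lower())
        if tag is not None:
            counts[tag] += 1
    return counts


def dominant_tag(like_names, min_hits=2):
    """Return the most frequent tag, or None when the signal is too weak."""
    counts = tally_tags(like_names)
    if not counts:
        return None
    tag, hits = counts.most_common(1)[0]
    return tag if hits >= min_hits else None


print(dominant_tag(["Barack Obama", "Hillary Clinton", "Cheese"]))
print(dominant_tag(["Cheese"]))
```

Three Likes are enough to produce a confident-looking label, and a single stray Like is not; the threshold is the only “tuning” involved.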

So what’s next?

While this is trivially easy to do, DO NOT DO IT. You are definitely breaking their Acceptable Use Policies and possibly breaking the law.

Once again, I write about these things because a) they’re possible and b) they’re likely already happening. As a result, we need to be aware of what information we’re sharing about ourselves, how it’s being used, and how it might be used for good or evil purposes.

The next time you think the privacy settings of one of these sites are protecting you, understand that the only thing that can protect you and your information is YOU.

Nice. Though using this script, the user would still have to opt-in to using the app, so there needs to be a bit more.

That’s why something with a huge user base – Hulu, Airbnb, a major job site – would be ideal.